Quick Answer

ServiceNow, monday.com, HubSpot, and Salesforce all announced AI agent platforms, promising autonomous AI that can plan, coordinate, and execute work without human intervention. But deploying AI agents on top of broken systems amplifies chaos instead of solving problems. Mid-market companies need clean data, defined processes, and operational discipline before AI deployment—the same prerequisites that no-code platforms required a decade ago. If your teams are using Excel instead of your CRM, fix that first. AI agents won’t fix broken systems; they’ll make them worse.

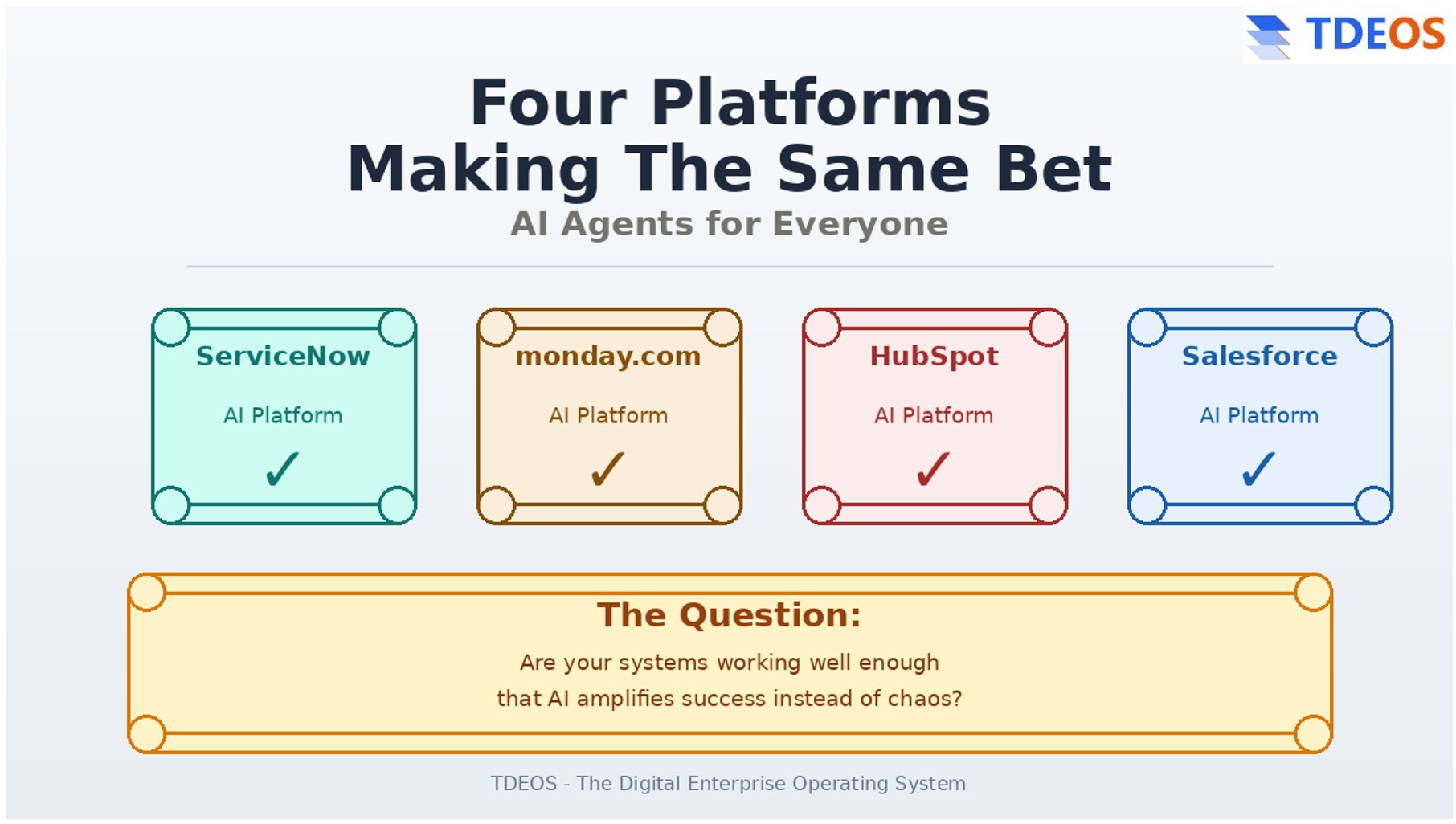

The Four Simultaneous AI Platform Announcements

In the week of May 6-12, 2026, four major enterprise software vendors made nearly identical strategic announcements:

ServiceNow (Knowledge 2026, May 7-9): Positioned itself as “the AI platform for business transformation” with autonomous AI agents that can reason through business processes.

monday.com (May 6): Announced the biggest rebrand in company history, repositioning as an “AI Work Platform” where people and AI agents collaborate.

HubSpot (Q1 2026 earnings, May 8): Positioned itself as “the agentic customer platform for scaling businesses” with AI agents handling customer interactions autonomously.

Salesforce (ongoing since 2025): Continued pushing Agentforce, its autonomous AI agent system that executes work across sales, service, marketing, and commerce.

All four are betting their entire platform strategy on the same concept: AI agents that can plan, coordinate, and execute work alongside human teams with minimal supervision.

The pitch is identical across vendors: Deploy AI agents. Automate workflows. Reduce manual work. Scale operations without adding headcount.

But there’s a critical prerequisite that nobody’s discussing.

Why AI Agents Require What System Drift Destroys

AI agents are not standalone tools. They’re automation layers that sit on top of your existing enterprise systems.

They learn from your data. They execute your workflows. They make decisions based on your processes.

Which means they require three things:

Clean data. AI agents learn patterns from historical data. If your sales team tracks real pipeline in Excel because they don’t trust what’s in the CRM, your AI agent learns from incomplete or inaccurate data. The predictions it makes will be confidently wrong.

Defined processes. AI agents automate workflows. If your operations team maintains shadow systems in Notion or Google Sheets because the official platforms don’t match how work actually happens, AI agents will automate the wrong workflows. You’ll get faster execution of broken processes.

Clear governance. AI agents make autonomous decisions. If nobody in your organization owns system health—if there’s no executive accountability for data quality, process alignment, or system governance—nobody will own AI agent supervision either.

System drift destroys all three prerequisites.

System drift is the gradual deterioration of enterprise platforms after implementation. Teams stop using the official system and build shadow workarounds, and leadership stops trusting the data. Within 12-18 months, the expensive platform becomes compliance theater while real work happens in spreadsheets.

If your systems are already experiencing drift, adding AI agents doesn’t fix the underlying problems. It amplifies them.

The AI executes bad workflows faster. It makes decisions based on unreliable data more confidently. It operates without effective oversight because the governance structures were never established.

The Pattern: We’ve Heard This Promise Before

A decade ago, enterprise software vendors made a similar promise about no-code platforms.

The pitch: Anyone can configure workflows without technical expertise. Citizen developers will build the tools they need. Business users will customize platforms to match their processes.

Some companies succeeded. Most didn’t.

The companies that succeeded had three things in common:

Process ownership. Someone at the executive level owned whether the platform actually served business needs. Not just whether it was implemented, but whether it remained aligned with how work actually happened.

Data quality standards. Clear definitions of what “good data” meant, enforcement mechanisms for compliance, and consequences for teams that didn’t maintain standards.

Authority to say no. Someone who could reject requests for custom fields, workflows, or integrations that would add complexity without proportional value.

The companies that failed treated the platform as a technical solution to an organizational problem.

They assumed that configuring the right fields, building the right workflows, and training users would be sufficient. They didn’t establish governance structures. They didn’t enforce data quality. They didn’t have executive ownership of platform health.

Within 18 months, complexity accumulated. Data quality declined. Teams built shadow systems. The platform drifted from operational reality.

The barrier to success wasn’t technical. The technology worked. The barrier was operational discipline.

AI agents are the same bet with higher stakes.

The Vendor Promises vs. The Implementation Reality

The vendor messaging around AI agents emphasizes simplicity:

monday.com: “Any team member can configure, deploy, and direct AI agents, with no technical background required.”

ServiceNow: “AI that can reason through business processes autonomously, understanding context and making intelligent decisions.”

Salesforce: “Agentforce agents work across your entire operation—sales, service, marketing, commerce—without human intervention.”

HubSpot: “Agentic AI that handles customer interactions, qualifies leads, and manages relationships automatically.”

The technology might deliver on these promises. The AI is sophisticated. The natural language interfaces work. The autonomous execution is real.

But here’s the contradiction that reveals the operational reality:

If AI reduces complexity and eliminates the need for technical expertise, why are vendors simultaneously building massive implementation networks?

ServiceNow announced partnerships with Genesys, Fujitsu, and Infosys—adding thousands of newly trained consultants specifically to help customers implement AI.

Salesforce has been building the Agentforce Partner Network for months, recruiting implementation partners globally.

HubSpot is expanding its Solutions Partner Program to support AI deployments, adding specialized AI implementation tracks.

monday.com launched a managed marketplace where companies can hire pre-qualified AI agents that have already been configured and tested.

The vendors know what the marketing materials don’t say: Enterprise AI requires enterprise support.

Not because the technology is bad. Because organizational readiness varies dramatically, and most companies need help establishing the prerequisites for successful AI deployment.

Those prerequisites are:

- Clean, trustworthy data in core systems

- Well-defined processes that match operational reality

- Governance structures with executive ownership

- Operational discipline to maintain all three over time

Mid-market companies are particularly vulnerable because they don’t have the internal resources to establish these prerequisites without external help.

Why This Matters for Mid-Market Companies

Enterprise companies have resources that mid-market companies don’t:

Dedicated platform teams. People whose full-time job is maintaining CRM health, ERP data quality, or system governance.

Revenue operations functions. Teams that monitor how systems are being used, catch drift early, and enforce standards across departments.

Change management capacity. Resources to establish new processes, train teams properly, and ensure adoption doesn’t decline over time.

Implementation budget. The ability to hire consultant teams to configure AI agents, tune performance, and provide ongoing optimization.

Mid-market companies (50-500 employees, $10M-$100M revenue) typically don’t have any of these.

Your operations person manages the CRM in addition to three other responsibilities. You don’t have a dedicated RevOps function. Change management happens through department heads who are already stretched thin.

Which means when vendors promise that “anyone can configure AI agents with no technical background,” they’re not lying about the technology. They’re omitting the operational context.

The technology allows non-technical configuration. But successful deployment still requires:

- Someone who understands your business processes well enough to configure agents correctly

- Authority to enforce the data quality standards that agents depend on

- Ongoing supervision to catch when agents make decisions that don’t match business reality

- Governance to prevent agents from automating workflows that have drifted from how work actually happens

Without these, you’re deploying autonomous decision-making on top of systems that your teams already don’t trust.

Real-World Pattern: What Happens When AI Meets System Drift

I’m seeing this pattern now with mid-market clients evaluating AI agent deployments.

The conversation always starts the same way: “We want AI to help our team be more productive. The vendors say AI agents can automate routine work and let our people focus on high-value activities.”

The discovery process reveals the same underlying issues:

The sales team tracks real pipeline in a shared Excel file because they don’t trust the forecasts in Salesforce. Adding AI-powered deal scoring won’t help—the AI will score deals based on incomplete CRM data, not the actual pipeline in the spreadsheet.

Customer success maintains account health in Notion because the official CRM doesn’t have the fields they need to track relationship status effectively. AI-powered churn prediction won’t work—the signals that indicate churn risk are in Notion, not the system the AI can access.

Operations built workflows in Google Sheets because the ERP is too complex for daily use. AI-powered process automation will automate the workarounds instead of the official processes, institutionalizing the dysfunction.

In every case, the conversation ends the same way: “We need to fix the underlying systems first, then we can talk about AI deployment.”

Not because the AI technology is inadequate. Because deploying AI on top of drifting systems amplifies the problems instead of solving them.
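One way to make the first example concrete is to measure how much of the real pipeline the CRM never sees. The sketch below is illustrative only: the `Deal` type, the deal names, and the amounts are hypothetical, and matching on deal name is a simplifying assumption rather than a recommended matching rule.

```python
from dataclasses import dataclass


@dataclass
class Deal:
    name: str
    amount: float


def pipeline_gap(crm_deals, shadow_deals):
    """Return the shadow-sheet deals missing from the CRM and their share of pipeline value."""
    # Assumption: deals are matched by name, ignoring case and surrounding spaces.
    crm_names = {d.name.strip().lower() for d in crm_deals}
    missing = [d for d in shadow_deals if d.name.strip().lower() not in crm_names]
    shadow_total = sum(d.amount for d in shadow_deals)
    missing_total = sum(d.amount for d in missing)
    share_missing = missing_total / shadow_total if shadow_total else 0.0
    return missing, share_missing


# Hypothetical data: the spreadsheet holds one deal the CRM has never seen.
crm = [Deal("Acme Renewal", 40_000), Deal("Globex Pilot", 15_000)]
sheet = [
    Deal("Acme Renewal", 40_000),
    Deal("Globex Pilot", 15_000),
    Deal("Initech Expansion", 45_000),
]

missing, share = pipeline_gap(crm, sheet)
print([d.name for d in missing], f"{share:.0%}")  # ['Initech Expansion'] 45%
```

In this made-up case, any deal scoring that reads only the CRM would be blind to 45% of the pipeline value the sales team is actually working.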

The Question Mid-Market Companies Should Ask

Before responding to AI platform announcements with procurement requests or budget allocations, ask one question:

Are your core business systems working well enough that adding AI would amplify success instead of chaos?

Specific indicators that you’re ready for AI agents:

Your teams actually use the official systems. Sales reps update the CRM during customer calls, not afterward from personal notes. Operations executes work in the ERP, not in shadow spreadsheets. Customer success relies on the official platform for account health, not Notion databases.

Leadership trusts the data for decisions. When your CEO needs pipeline numbers for the board, they pull a CRM report—they don’t ask for “the real numbers” in a spreadsheet. Forecast accuracy is high because the data reflects reality.

You have governance structures in place. Someone at the executive level owns system health. Data quality standards exist and are enforced. There’s a process for evaluating requests to add complexity. Teams can’t create custom fields or workflows without approval.

Your systems match how work actually happens. Workflows were designed by observing real work, not by implementing consultant recommendations. Required fields align with information teams actually have at each stage. The system serves the work instead of creating compliance overhead.

If these conditions exist, AI agents can amplify your operational effectiveness.

If they don’t, fix the foundations first.
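The four indicators above can be treated as a simple all-or-nothing checklist. This is a minimal sketch, assuming each indicator is answered honestly as yes or no; the `Readiness` class and its field names are hypothetical labels for the indicators, not part of any vendor tool.

```python
from dataclasses import dataclass, fields


@dataclass
class Readiness:
    teams_use_official_systems: bool
    leadership_trusts_data: bool
    governance_in_place: bool
    systems_match_real_work: bool


def assess(readiness):
    """Return (ready, gaps): ready only if every indicator is true."""
    gaps = [f.name for f in fields(readiness) if not getattr(readiness, f.name)]
    return len(gaps) == 0, gaps


# Hypothetical company: governance exists, but leadership asks for "the real numbers".
ready, gaps = assess(Readiness(True, False, True, False))
print(ready, gaps)
```

The design choice is deliberate: there is no weighted score, because a single missing foundation is enough for AI agents to amplify the wrong thing.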

How to Prepare for AI Agent Deployment

If your assessment reveals that core systems aren’t ready for AI, the preparation work is the same work that should have happened after initial platform implementation:

Establish executive ownership of system health. Assign someone at the COO, VP Operations, or Head of RevOps level who owns whether systems serve business decisions. Not just whether they’re maintained, but whether they remain aligned with operational reality.

Simplify ruthlessly. Audit what’s actually being used. Remove unused fields, delete obsolete workflows, archive reports nobody opens. Complexity that accumulated over time creates data quality problems and makes AI training less effective.

Clean the data. Define what “complete” means for critical records. Enforce completeness standards. Deduplicate contacts and accounts. Standardize naming conventions. Budget 40-60 hours for a mid-market CRM or ERP.

Align workflows with reality. Shadow your teams. Watch how work actually happens. Redesign system workflows to match observed reality instead of idealized processes. Eliminate the gap that causes teams to build shadow systems.

Eliminate shadow systems. Don’t ban them through policy. Make the official system more useful than the workarounds. Migrate what’s valuable, discard what’s not. Rebuild trust through consistent delivery of accurate reports.

Establish data governance. Create standards for data quality. Assign accountability for enforcement. Define consequences for non-compliance. Prevent complexity from accumulating by requiring removal of something old when adding something new.

This work takes 8-16 weeks of internal time, depending on how advanced the drift is.

It’s not optional preparation for AI. It’s the foundation that determines whether AI amplifies success or chaos.
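The “clean the data” step is the easiest one to picture in code. Here is a rough sketch of two of its parts, defining “complete” and deduplicating contacts. The required fields, the sample contacts, and the choice of email as the match key are all assumptions for illustration; your own definition of “complete” will differ.

```python
# Hypothetical definition of "complete" for a contact record.
REQUIRED_FIELDS = ("name", "email", "owner")


def is_complete(record):
    """A record is complete when every required field has a non-blank value."""
    return all(str(record.get(f) or "").strip() for f in REQUIRED_FIELDS)


def dedupe_by_email(records):
    """Keep the first record per normalized email; records without an email are kept as-is."""
    kept = {}
    unkeyed = []
    for rec in records:
        key = (rec.get("email") or "").strip().lower()
        if not key:
            unkeyed.append(rec)  # cannot be matched, so leave it for manual review
        elif key not in kept:
            kept[key] = rec
    return list(kept.values()) + unkeyed


contacts = [
    {"name": "Ana Ruiz", "email": "ana@example.com", "owner": "sales"},
    {"name": "Ana Ruiz", "email": " ANA@example.com ", "owner": "sales"},
    {"name": "Bo Chen", "email": "", "owner": "cs"},
]

unique = dedupe_by_email(contacts)
incomplete = [c["name"] for c in unique if not is_complete(c)]
completeness = sum(is_complete(c) for c in unique) / len(unique)
print(len(unique), incomplete, f"{completeness:.0%}")  # 2 ['Bo Chen'] 50%
```

The code is the small part. The hard part is getting agreement on what belongs in `REQUIRED_FIELDS` and who is accountable when records fall short.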

Why Vendors Are Building AI on Drifting Foundations

The enterprise software vendors making these AI announcements aren’t wrong about the technology.

AI agents can plan work autonomously. They can reason through business processes. They can execute tasks without human intervention. The technology works.

But vendors have a business model problem.

They sell to companies at different stages of operational maturity. Some have clean data, defined processes, and strong governance. Most don’t.

If vendors made operational readiness a prerequisite for AI deployment, they’d exclude most of their addressable market. So they position AI agents as solutions that work regardless of underlying system health.

The implementation partner networks they’re building simultaneously reveal the truth: most companies need help getting ready.

ServiceNow’s partnerships with Genesys, Fujitsu, and Infosys aren’t just for AI configuration. They’re for the organizational work that precedes successful deployment.

Salesforce’s Agentforce Partner Network isn’t just selling AI implementation. It’s selling the governance frameworks and data quality remediation that make AI viable.

The vendors know that AI deployed on drifting systems fails. They’re preparing implementation networks to help customers fix the foundations while also deploying the AI layer.

For mid-market companies, that means:

If you can afford implementation partners to help establish governance, clean data, and align processes, AI agent deployment might succeed even if your current systems are drifting.

If you can’t afford that support, you need to fix system drift before deploying AI. Otherwise you’re adding autonomous decision-making to systems that human teams already don’t trust.

The Timeline: Fix Foundations, Then Deploy AI

For most mid-market companies, the realistic timeline looks like this:

Months 1-2: Assessment and ownership

- Audit current system health (adoption, data quality, shadow systems)

- Establish executive ownership of system health

- Define what “AI-ready” means for your organization

Months 3-4: Simplification and cleanup

- Remove unused complexity from core systems

- Clean data (dedupe, standardize, complete records)

- Document actual workflows vs. system workflows

Months 5-6: Alignment and governance

- Redesign system workflows to match reality

- Establish data quality standards and enforcement

- Eliminate shadow systems by making official systems useful

Months 7-8: Trust rebuilding

- Deliver consistently accurate reports

- Prove systems can be trusted for decisions

- Train teams on simplified, aligned systems

Month 9+: AI deployment from strength

- Begin AI agent deployment with solid foundations

- Agents learn from clean data

- Automation executes correct workflows

- Governance structures supervise autonomous decisions

This timeline assumes moderate system drift. If drift is advanced (18+ months since implementation, extensive shadow systems, leadership that has completely stopped trusting the data), add 2-4 months.
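As a quick worked version of that adjustment, the sketch below computes the earliest month AI deployment could begin. It assumes the extra 2-4 months for advanced drift are spent on foundation work before deployment starts, which is one reading of the timeline; the constant and function names are hypothetical.

```python
BASE_FOUNDATION_MONTHS = 8      # Months 1-8 in the timeline above
ADVANCED_DRIFT_EXTRA = (2, 4)   # additional months when drift is advanced


def earliest_ai_deployment_month(advanced_drift):
    """Return (best_case, worst_case) for the first month of AI deployment."""
    extra_low, extra_high = ADVANCED_DRIFT_EXTRA if advanced_drift else (0, 0)
    return (
        BASE_FOUNDATION_MONTHS + extra_low + 1,
        BASE_FOUNDATION_MONTHS + extra_high + 1,
    )


print(earliest_ai_deployment_month(False))  # (9, 9)
print(earliest_ai_deployment_month(True))   # (11, 13)
```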

Frequently Asked Questions

Q: Should mid-market companies avoid AI agents entirely?

A: No. AI agents will deliver real productivity gains for companies with operational foundations in place. The question is timing: deploy AI after fixing system drift, not as a solution to it. Companies with clean data, defined processes, and governance structures should evaluate AI agents now. Companies still using Excel for real work should fix that first.

Q: How do I know if our systems are ready for AI agent deployment?

A: Ask these questions: Do teams use official systems for daily work rather than shadow trackers? Does leadership rely on system data for board reports instead of asking for “real numbers” elsewhere? Do you have executive-level ownership of system health? Can you enforce data quality standards? If every answer is yes, you’re ready. If any answer is no, you’re not.

Q: What’s the difference between AI agents and regular automation?

A: Regular automation executes predefined workflows (“if this happens, do that”). AI agents make autonomous decisions based on learned patterns (“analyze the situation, determine the best action, execute it”). AI agents require higher quality data and stronger governance because they’re making decisions, not just following rules. Bad automation executes wrong steps. Bad AI agents make wrong decisions at scale.

Q: Why are vendors promising simplicity while building consultant networks?

A: The technology is simple to configure; that part is true. Organizational readiness is what varies dramatically. Vendors build consultant networks because most companies need help establishing data governance, cleaning historical data, and aligning processes before AI deployment succeeds. The contradiction reveals that “simple to configure” doesn’t mean “simple to deploy successfully.”

Q: Is this pattern specific to CRM, or does it apply to all enterprise systems?

A: This applies to any enterprise system where AI agents will operate: CRM, ERP, HRM, project management, customer service platforms. AI agents require clean data and defined processes regardless of which system they’re automating. System drift (gradual deterioration after implementation) affects all enterprise platforms, not just CRM.

Q: How much does it cost to fix system drift before deploying AI?

A: For mid-market companies, fixing moderate drift typically costs $25K-$75K in consulting and implementation work plus 8-16 weeks of internal time. This includes data cleanup, workflow redesign, governance establishment, and trust rebuilding. Advanced drift (18+ months since implementation) can cost $40K-$95K and take 12-20 weeks. Compare this to the cost of deploying AI on broken systems: wasted AI licensing, incorrect autonomous decisions, and lost productivity from automating the wrong workflows.

Q: What if my vendor says their AI doesn’t require clean data?

A: A claim that AI “doesn’t require clean data” means either (a) the AI has extremely limited capabilities, or (b) the claim is marketing language obscuring the truth. All AI learns patterns from data. If the data is incomplete, inconsistent, or inaccurate, the patterns the AI learns will be wrong. Vendors may offer data cleaning as part of AI implementation, but someone still has to do that work. It’s just bundled differently.

About TDEOS

TDEOS helps mid-market companies assess whether their systems are ready for AI agent deployment and fix the foundations when they’re not.

We work with organizations (50-500 employees) in healthcare, nonprofits, financial services, and professional services that are evaluating AI agents but need to address system drift first.

Common situations we address:

- Teams using Excel/Notion/Google Sheets instead of official systems

- Leadership that doesn’t trust system data for decisions

- AI agent evaluations under way while current systems are drifting

- A need to assess whether systems are AI-ready

- Vendors promising that AI will solve adoption problems

About the Author

Raman Arora is the founder of TDEOS (The Digital Enterprise Operating System), a CRM and digital transformation consultancy based in Cincinnati, Ohio, serving mid-market organizations nationwide. With 22+ years of Fortune 500 operations experience at GE, Paycor, Dell, Farmers, and more, Raman specializes in helping financial services, nonprofits, healthcare, and professional services firms close the gap between technology investment and measurable business outcomes. He is a monday.com certified partner and Make.com certified consultant.

Related Resources

Articles on System Drift:

CRM Drift: Why CRM Systems Fail After Implementation

https://tdeos.com/crm/crm-drift/

Shadow CRM Systems: When Teams Build Their Own Tracking Tools

https://tdeos.com/crm/shadow-crm/

Why Leadership Stops Trusting the CRM

https://tdeos.com/when-leadership-stops-trusting-the-crm/

The Year After Go-Live: Why Success Turns Into Struggle

https://tdeos.com/the-year-after-go-live-why-crm-success-turns-into-crm-struggle/

Services:

CRM Consulting Overview

https://tdeos.com/crm/

Digital Transformation Consulting

https://tdeos.com/